IN SHORT: If you have to run AniStudio, do so in an offline VM. The creator (or, at best, someone willing to defend him 20 hours a day) has expressed a desire to dox anons multiple times, so you shouldn't take any risks.

Ani AKA AnimAnon AKA the biggest /ldg/ schizo of 2025 is a relentless ban-evading spammer that shitposts threads into unusability with the goals of:

- Faking organic support for himself and his image gen UI AniStudio

- Faking organic criticism of perceived competitors to AniStudio (primarily ComfyUI)

- Editing the OP to advertise his UI or remove links related to its competitors

- Removing this page from the OP

(Quick links to threads that had ~310 posts by him that got IP-wiped: 1 2 3 4 5 6) (and many more; at this point I'm not sure it's worth tracking further)

AniStudio is an AI image gen frontend developed by him. It has no observed users on /ldg/ other than himself. For a long while there wasn't even a chance of it having any users, since Ani is an inexperienced dev with little knowledge of software delivery pipelines, and AniStudio was unrunnable on other people's machines until another developer offered to fix it for him. (Ani took credit for the fixes and belittled the good samaritan.)

Ani has an exceptional lack of self-awareness, and most /g/ regulars are able to spot him. This is because he has an unshakeable tendency to say things only Ani would know about himself, or things only he would know about AniStudio, or conspicuously pose as regular AniStudio users despite there being zero indication of organic AniStudio usage (AniStudio gens that aren't his avatar, AniStudio usage questions, etc.). He once forgot to remove his tripcode while spamming. He also once praised himself, not realizing his attached screenshot identified him. He was also caught botting polls. (Full thread for context.)

UPDATE - Jan 26 2026

Ani was banned and IP-wiped, and his gens were wiped, alongside 95 spam posts all made in the span of only 3 hours, all with the same deletion time. Click here to see an image showing the deletion times as well as some examples of deleted posts. (As expected, they include examples of: patting himself on the back in third person, blaming some other users, spamming links to his own dead thread, racist spam, hostility towards anyone posting gens.) You can also check out the desuarchive links directly:

UPDATE END

One recent example of his spam, out of many:

As you can see, he isn't very subtle when patting himself on the back in third person.

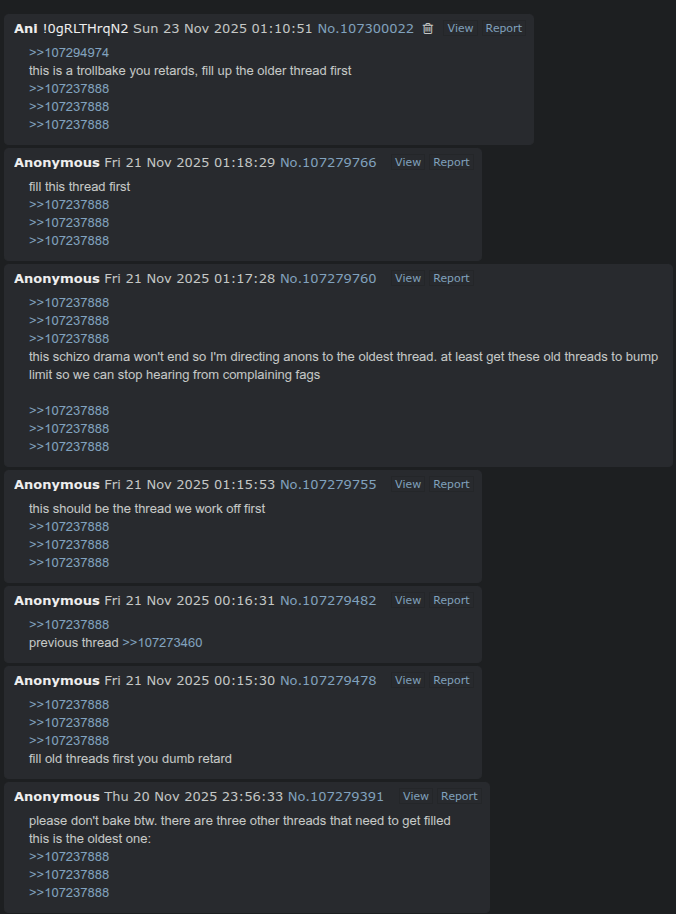

Whenever Ani tries to post /ldg/ OPs with AniStudio in them (or without this page), most anons entirely ignore it, going to the regular thread instead even when the one with AniStudio was created first.

That doesn't stop him from relentlessly spamming links to his own deserted threads to try and get people to use them:

Screenshot truncated. Click to see all 21 posts linking to his ad-thread. Yes, he forgot to remove his tripcode in that last post.

He's also recently taken to spamming his own deserted thread with images reposted straight from the main thread to make his look active, see: his thread, the original thread. He also continually threatens to keep spamming until people use his threads (example 1, example 2).

It should be noted that there obviously are a lot of genuine criticisms of ComfyUI and other frontends. Unfortunately, Ani likes to latch onto those, amplify them, and use them to shitpost AI threads into unusability.

The best tactics when dealing with him are to ignore him and avoid derailing the thread. However, the tiniest hope of perhaps getting an unsuspecting newer user to try AniStudio can usually motivate him to keep shitposting threads into oblivion. For this reason, it is wise to occasionally call him out to let newer posters know about his antics.

Here is a recent example of his spam, click the screenshot to see all 41 deleted posts in one /ldg/ thread:

(desuarchive) (other thread with also ~40 of his posts deleted) (and another one with ~70 of his posts deleted) (30) (30) (100)

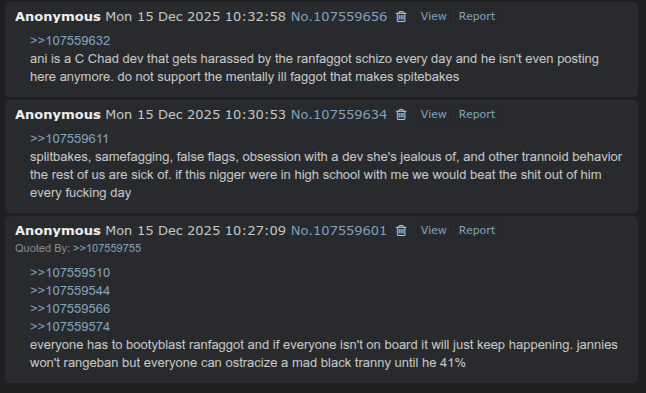

Considering his history of mental instability and violent language (as in the above example), as well as calls for doxing, any software written by him should be considered unsafe and only run inside an isolated environment such as a virtual machine.